Ollama

The Ollama integration adds a conversation agent in Home Assistant powered by a local Ollama server.

Controlling Home Assistant is an experimental feature that gives the AI access to the Assist API of Home Assistant. You can control which devices and entities it can access from the exposed entities page. The AI can give you information about your devices and control them.

This integration does not integrate with sentence triggers.

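This integration requires an external Ollama server, which is available for macOS, Linux, and Windows. Follow the download instructions to install the server. Once installed, configure Ollama to be accessible over the network.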
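One way to confirm that the server is reachable over the network is to query it from another machine. The following is a minimal sketch, assuming Ollama's default port `11434` and its `/api/tags` endpoint for listing installed models. The function names are illustrative and not part of the integration.

```python
import json
import urllib.request


def tags_url(host: str, port: int = 11434) -> str:
    """Build the URL of Ollama's model-listing endpoint (assumed default port)."""
    return f"http://{host}:{port}/api/tags"


def model_names(payload: dict) -> list[str]:
    """Extract the model names from a decoded /api/tags response body."""
    return [model["name"] for model in payload.get("models", [])]


def list_models(host: str, port: int = 11434, timeout: float = 5.0) -> list[str]:
    """Return the installed model names.

    Raises urllib.error.URLError if the server cannot be reached.
    """
    with urllib.request.urlopen(tags_url(host, port), timeout=timeout) as resp:
        return model_names(json.load(resp))
```

For example, `list_models("192.168.1.50")` should return the installed models, where `192.168.1.50` is a placeholder for your server's address. If the call fails with a connection error from another machine but works locally, the server is likely still listening on the loopback interface only. Ollama reads its bind address from the `OLLAMA_HOST` environment variable.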

Configuration

To add the Ollama service to your Home Assistant instance, use this My button:

Manual configuration steps

- Browse to your Home Assistant instance.

- Go to Settings > Devices & services.

- In the lower-right corner, select Add integration.

- From the list, select Ollama.

- Follow the instructions on screen to complete the setup.

Options

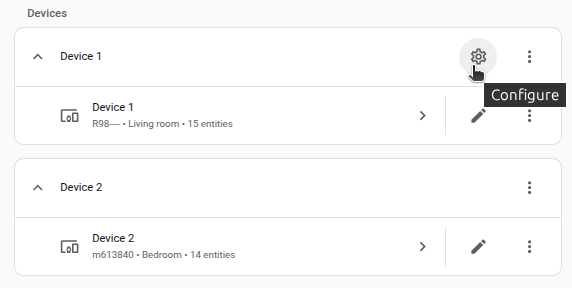

To define options for Ollama, follow these steps:

- In Home Assistant, go to Settings > Devices & services.

- If multiple instances of Ollama are configured, choose the instance you want to configure.

- On the card, select the cogwheel.
  - If the card does not have a cogwheel, the integration does not support options for this device.

- Edit the options, then select Submit to save the changes.

Controlling Home Assistant

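If you want to experiment with local LLMs using Home Assistant, we recommend exposing fewer than 25 entities. Note that smaller models are more likely to make mistakes than larger models.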

Only models that support Tools may control Home Assistant.
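To check whether a given model supports tools before selecting it, you can ask the Ollama server directly. The sketch below assumes a recent Ollama version whose `/api/show` response includes a `capabilities` list. Older versions may omit that field, in which case this check reports `False` even for capable models. The function names are illustrative.

```python
import json
import urllib.request


def supports_tools(show_payload: dict) -> bool:
    """Return True if a decoded /api/show response lists the 'tools' capability."""
    return "tools" in show_payload.get("capabilities", [])


def show_model(host: str, model: str, port: int = 11434, timeout: float = 5.0) -> dict:
    """Fetch model details from Ollama's /api/show endpoint (assumed default port)."""
    request = urllib.request.Request(
        f"http://{host}:{port}/api/show",
        data=json.dumps({"model": model}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)
```

With a reachable server, `supports_tools(show_model(host, model))` combines the two steps, where `host` and `model` are your server address and the model name you plan to use.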

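Smaller models may not reliably maintain a conversation when controlling Home Assistant is enabled. However, you can use multiple Ollama configurations that share the same model but use different prompts:

- Add the Ollama integration without enabling control of Home Assistant. You can use this conversation agent to have a conversation.

- Add an additional Ollama integration, using the same model, with control of Home Assistant enabled. You can use this conversation agent to control Home Assistant.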